Other Parts Discussed in Thread: TCAN4550

Hi,

I am bringing up a TCAN4550 with the tcan4x5x driver taken from Linux 5.14, and ran into the following problems while debugging:

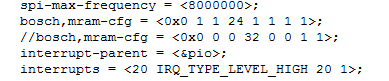

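1. When the driver reads the ID for matching, it calls tcan4x5x_read_reg, which adds 0x1000 to every register address. The address word it sends is therefore 0x41100001, whereas according to the datasheet it should be 0x41000001. After I changed this, the ID reads back correctly.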

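2. Looking at the logic in m_can_check_core_release() after the ID is read: the value read from ID0 is 0x4e414354, according to both the datasheet and my measurements, and it can never satisfy the version check that follows, which expects a result of 30 to 32. My guess is that ID1 should be read instead: its value is 0x30353534, which that logic evaluates to 30 and which therefore passes the version check.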

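3. After modifying parts of the driver, it loads normally. Once the CAN device has come up successfully, I use it from user space through a socket, and the application's write() fails with "No buffer space available".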

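4. The SPI transfer is big-endian (a problem on the SPI side), and the chip did not respond. After I fixed this, it responds normally.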
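To make points 1 and 4 concrete, here is a minimal sketch of how I understand the 32-bit SPI header word. The field layout (opcode in the top byte, 16-bit register address in the middle, length in the low byte) is inferred from the two values quoted in point 1. The helper names `tcan_header` and `put_be32` are made up for illustration and are not functions from the driver. The byte order on the wire (opcode byte first) is an assumption on my part.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Read opcode as it appears in the header words quoted above. */
#define TCAN_OP_READ    0x41u

/* Offset the driver adds to every register address (point 1). */
#define MCAN_REG_OFFSET 0x1000u

/*
 * Hypothetical helper: build the header word.
 * Assumed layout: [31:24] opcode, [23:8] register address, [7:0] length.
 */
static inline uint32_t tcan_header(uint8_t opcode, uint16_t addr, uint8_t len)
{
    return ((uint32_t)opcode << 24) | ((uint32_t)addr << 8) | (uint32_t)len;
}

/*
 * Hypothetical helper: serialize a word most-significant byte first, so the
 * bytes on the wire do not depend on the CPU's native endianness.
 */
static inline void put_be32(uint8_t out[4], uint32_t word)
{
    assert(out != NULL);
    out[0] = (uint8_t)(word >> 24);
    out[1] = (uint8_t)(word >> 16);
    out[2] = (uint8_t)(word >> 8);
    out[3] = (uint8_t)word;
}
```

With these, `tcan_header(TCAN_OP_READ, 0x0000, 1)` gives 0x41000001, and adding `MCAN_REG_OFFSET` to the address gives 0x41100001, which are the two words from point 1. `put_be32` then yields the bytes 0x41, 0x00, 0x00, 0x01 in that order for the first word.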

The only problem still unresolved is item 3.
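For reference on point 2, the sketch below reproduces the arithmetic I am describing. It assumes the version check takes the release number from bits [31:28] and the step from bits [27:24] of the value read, and returns 30 + step only when the release number is 3. This is my reading of m_can_check_core_release(), not a copy of it. `core_release_from` and `reg_to_ascii` are hypothetical names used only here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Hypothetical model of the version check: REL in bits [31:28],
 * STEP in bits [27:24]; anything other than REL == 3 counts as unsupported.
 */
static inline int core_release_from(uint32_t reg)
{
    uint8_t rel  = (uint8_t)((reg >> 28) & 0xFu);
    uint8_t step = (uint8_t)((reg >> 24) & 0xFu);

    return (rel == 3) ? 30 + step : 0;
}

/*
 * Hypothetical helper: unpack a 32-bit ID register into four ASCII
 * characters, least-significant byte first, plus a terminating NUL.
 */
static inline void reg_to_ascii(uint32_t reg, char out[5])
{
    assert(out != NULL);
    for (int i = 0; i < 4; i++)
        out[i] = (char)((reg >> (8 * i)) & 0xFFu);
    out[4] = '\0';
}
```

Under these assumptions, 0x4e414354 has 4 in the top nibble and evaluates to 0 (unsupported), while 0x30353534 has 3 in the top nibble and 0 in the next, so it evaluates to 30. Read as ASCII, the two values spell "TCAN" and "4550". This only reproduces the numbers; it does not show which register the driver is meant to read.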